
Security teams don’t need a perfect model to make better calls. They need a clear one. Security fix prioritization gets easier when every issue is judged by risk, exposure, and effort, not by whoever speaks loudest.

For lean teams, that matters. A small backlog can still hide a public exploit, a weak control, or a patch that eats two days of work. A simple matrix keeps the noise down and the response sharp, and scoring an issue should never take longer than fixing it.

Why a matrix beats gut feel

Severity scores help, but they don’t tell the full story. A “critical” flaw on a sealed test box is not the same as a medium flaw on a public login page. Context changes the answer fast.

That is why modern triage starts with what is known, what is exposed, and what attackers are already using. When CISA adds a flaw to its Known Exploited Vulnerabilities (KEV) catalog, the clock starts, because active exploitation is now part of the story. OpenSSF makes the same point in its guide on runtime context: live asset data beats raw vulnerability counts.
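To make the KEV signal concrete, here is a minimal sketch of checking a finding against a downloaded copy of CISA's Known Exploited Vulnerabilities (KEV) catalog. It assumes the JSON feed layout CISA publishes (a top-level `vulnerabilities` array whose entries carry a `cveID` field). The function names `load_kev_ids` and `is_known_exploited` are hypothetical helpers for illustration, not part of any CISA tooling.

```python
import json


def load_kev_ids(feed_text: str) -> set[str]:
    """Return the CVE IDs listed in a KEV catalog JSON document.

    Assumes the published feed layout: a top-level "vulnerabilities"
    array whose entries each carry a "cveID" string.
    """
    catalog = json.loads(feed_text)
    return {entry["cveID"].upper() for entry in catalog.get("vulnerabilities", [])}


def is_known_exploited(cve_id: str, kev_ids: set[str]) -> bool:
    """True if the finding's CVE ID appears in the KEV catalog."""
    # Normalize whitespace and case so "cve-2021-44228" matches "CVE-2021-44228".
    return cve_id.strip().upper() in kev_ids


# A trimmed, illustrative excerpt; in practice, read the downloaded feed file.
sample_feed = json.dumps({"vulnerabilities": [{"cveID": "CVE-2021-44228"}]})
kev_ids = load_kev_ids(sample_feed)
print(is_known_exploited("cve-2021-44228", kev_ids))  # True
```

Loading the catalog once into a set keeps each lookup cheap, so the check can run on every new finding during triage.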

If a flaw is publicly exposed, actively exploited, and cheap to fix, it belongs at the top of the queue.

A matrix gives you a shared language. That matters when a CTO, an engineer, and an IT manager all look at the same ticket.

Build a simple scoring model

Start with four risk factors and one effort score. Keep the scale small so people will use it. If the scoring takes longer than the fix discussion, it is too complex.

| Factor | 1 point | 2 points | 3 points |
|---|---|---|---|
| Internet exposure | internal only | limited access | public or partner-facing |
| Exploit signal | no known abuse | proof of concept or chatter | known exploited vulnerability |
| Business impact | low | moderate | sensitive data, auth, or revenue path |
| Compensating controls | strong controls | partial controls | none or weak controls |

Add the four risk scores for a total from 4 to 12. Then score remediation effort from 1 to 3, where 1 is a small config change and 3 is a cross-team rollout. A one-point effort score should mean one engineer can finish it in a day. A three-point score should mean testing, coordination, or rollback risk.
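The scoring rule fits in a few lines of code. This is a minimal sketch, assuming each factor is recorded as an integer from 1 to 3 on the scales in the table; the `FixScore` class and its field names are hypothetical, chosen only to mirror the four risk factors plus effort.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FixScore:
    """Scores for one fix on the 1-3 scales from the matrix (hypothetical names)."""
    exposure: int  # 1 internal only, 2 limited access, 3 public or partner-facing
    exploit: int   # 1 no known abuse, 2 PoC or chatter, 3 known exploited
    impact: int    # 1 low, 2 moderate, 3 sensitive data, auth, or revenue path
    controls: int  # 1 strong, 2 partial, 3 none or weak
    effort: int    # 1 one engineer in a day ... 3 cross-team rollout

    def __post_init__(self) -> None:
        # Reject anything outside the small 1-3 scale so scores stay comparable.
        for name in ("exposure", "exploit", "impact", "controls", "effort"):
            value = getattr(self, name)
            if value not in (1, 2, 3):
                raise ValueError(f"{name} must be 1, 2, or 3, got {value!r}")

    @property
    def risk(self) -> int:
        # Sum of the four risk factors, so always between 4 and 12.
        return self.exposure + self.exploit + self.impact + self.controls


vpn = FixScore(exposure=3, exploit=3, impact=3, controls=3, effort=1)
print(vpn.risk, vpn.effort)  # 12 1
```

Keeping effort as a separate field, rather than folding it into the total, preserves the two-part view of risk and cost.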

That gives you a two-part view: risk and cost. A fix with a high risk total and low effort should rise first. A low-risk, high-effort item can wait unless the context changes. Wiz makes the same point in its vulnerability prioritization guide: context matters more than severity alone.

Read the matrix in plain English

The quadrants should map to action, not theory. If the team cannot turn a score into a decision, the matrix has failed.

- High risk, low effort goes into the current sprint or emergency window.

- High risk, high effort gets an owner, a target date, and a temporary control.

- Low risk, low effort can batch into the next normal patch cycle.

- Low risk, high effort usually waits.
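The four quadrants can be expressed as a small lookup. The matrix itself does not fix where "high" starts, so this sketch assumes a cutoff: a risk total of 9 or more counts as high risk, and only an effort score of 3 counts as high effort. Both thresholds and the `quadrant_action` name are hypothetical; tune them to your own backlog.

```python
HIGH_RISK_THRESHOLD = 9    # assumption: risk totals of 9-12 count as "high"
HIGH_EFFORT_THRESHOLD = 3  # assumption: only cross-team work (effort 3) is "high"


def quadrant_action(risk: int, effort: int) -> str:
    """Map a risk total (4-12) and an effort score (1-3) to a quadrant action."""
    if not 4 <= risk <= 12:
        raise ValueError(f"risk total must be 4-12, got {risk}")
    if effort not in (1, 2, 3):
        raise ValueError(f"effort must be 1-3, got {effort}")
    high_risk = risk >= HIGH_RISK_THRESHOLD
    high_effort = effort >= HIGH_EFFORT_THRESHOLD
    if high_risk and not high_effort:
        return "current sprint or emergency window"
    if high_risk and high_effort:
        return "assign owner, target date, and temporary control"
    if not high_effort:
        return "batch into next normal patch cycle"
    return "wait"


print(quadrant_action(12, 1))  # current sprint or emergency window
```

Because every input lands in exactly one branch, the same score always produces the same decision, which is what makes the matrix easy to defend.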

This is where compensating controls matter. A WAF, network segment, feature flag, or MDM policy can lower pressure while the team schedules the real fix. That is useful when a patch needs testing, vendor approval, or downtime.

If a workaround is needed, write it in the ticket. Don’t leave it in Slack. Otherwise the team loses track of the control before the next standup.
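One way to enforce "write it in the ticket" is a check that runs during grooming. This sketch assumes a hypothetical `Ticket` record with owner, target date, and compensating-control fields (map them to whatever your tracker actually uses), and it reuses an assumed cutoff of risk 9 or more and effort 3 for the high-risk, high-effort quadrant.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Ticket:
    # Hypothetical ticket fields; rename them to match your tracker.
    title: str
    risk: int
    effort: int
    owner: Optional[str] = None
    target_date: Optional[date] = None
    compensating_control: Optional[str] = None  # the workaround, written down


def missing_fields(ticket: Ticket, high_risk: int = 9, high_effort: int = 3) -> list[str]:
    """List what a high-risk, high-effort ticket still needs before grooming ends.

    Tickets outside that quadrant return an empty list; both thresholds
    are assumptions, not part of the matrix itself.
    """
    if ticket.risk < high_risk or ticket.effort < high_effort:
        return []
    missing = []
    if not ticket.owner:
        missing.append("owner")
    if ticket.target_date is None:
        missing.append("target_date")
    if not ticket.compensating_control:
        missing.append("compensating_control")
    return missing


t = Ticket("Legacy SSO upgrade", risk=10, effort=3, owner="alice")
print(missing_fields(t))  # ['target_date', 'compensating_control']
```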

The point is simple. You are not ranking bugs in a vacuum. You are ranking fixes inside a live environment.

A worked example with three real-world fixes

Here is a small-team example. The same matrix pushes these issues into different lanes.

| Issue | Key signals | Priority |
|---|---|---|
| Public-facing VPN appliance with a KEV-listed auth bug | internet exposed, active exploitation, no useful control, effort 1 | Fix now |
| Internal reporting app with a medium-severity library flaw | auth required, behind WAF, limited data exposure, effort 2 | Schedule next sprint |
| Dev dependency issue in a non-production service | no internet exposure, no KEV signal, strong access controls, effort 3 | Defer and revisit |

The VPN issue wins because exposure and active abuse push the risk score up fast. The reporting app sits lower because the controls reduce blast radius. The dev dependency waits because its fix costs more than the current risk justifies.
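The same ranking can be reproduced in code. The factor scores below are one reasonable reading of the "Key signals" column, not values stated in the example: for instance, "auth required" is scored as limited access (2) and "behind WAF" as partial controls (2). The issues are sorted by risk total, highest first, with the cheaper fix winning any tie.

```python
# Hypothetical factor scores read from the "Key signals" column:
# (exposure, exploit signal, business impact, compensating controls, effort)
ISSUES = {
    "VPN appliance, KEV-listed auth bug": (3, 3, 3, 3, 1),
    "Internal reporting app, library flaw": (2, 1, 2, 2, 2),
    "Dev dependency, non-production service": (1, 1, 1, 1, 3),
}


def ranked(issues: dict[str, tuple[int, int, int, int, int]]) -> list[tuple[str, int, int]]:
    """Return (name, risk total, effort): highest risk first, cheaper fix first on ties."""
    rows = []
    for name, (exposure, exploit, impact, controls, effort) in issues.items():
        rows.append((name, exposure + exploit + impact + controls, effort))
    return sorted(rows, key=lambda row: (-row[1], row[2]))


for name, risk, effort in ranked(ISSUES):
    print(f"{risk} (effort {effort}): {name}")
# 12 (effort 1): VPN appliance, KEV-listed auth bug
# 7 (effort 2): Internal reporting app, library flaw
# 4 (effort 3): Dev dependency, non-production service
```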

This is the kind of tradeoff lean teams need. It also makes the order easy to explain to a product manager or an executive. Nobody has to guess why one ticket moved ahead of another.

Put the matrix into backlog grooming and patch management

Use the matrix in weekly grooming, before anyone estimates story points. Start by pulling in new findings, KEV items, and internet-facing assets. Then score each item with the same rules every time. Keep the scoring rubric in the ticket template, so people don’t rebuild the logic each week.

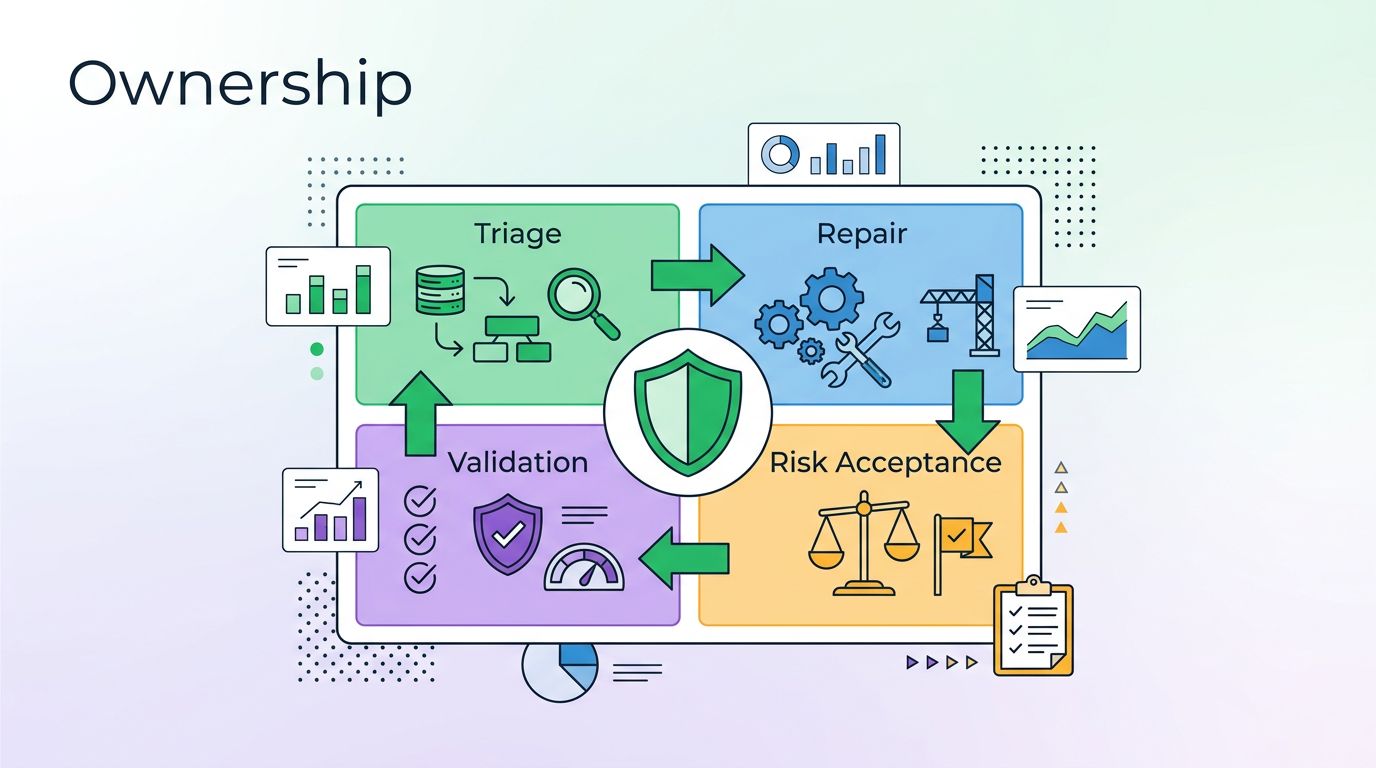

- Triage new findings in one short review block.

- Assign risk scores based on exposure, exploit signal, impact, and controls.

- Set effort scores with input from the engineer who will do the work.

- Turn the result into one action: fix now, schedule, mitigate, or accept.

- Re-score when the environment changes, because a new control or exposure can move the fix.
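Re-scoring works best when it is a cheap, explicit step. This sketch assumes a hypothetical `Finding` record holding the four factor scores and effort; `rescore` applies the environment change and reports how far the risk total moved, so the team can see at a glance whether the fix should change lanes.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Finding:
    # Hypothetical record: the four risk factors plus effort, each scored 1-3.
    name: str
    exposure: int
    exploit: int
    impact: int
    controls: int
    effort: int

    @property
    def risk(self) -> int:
        return self.exposure + self.exploit + self.impact + self.controls


def rescore(finding: Finding, **changes: int) -> tuple[Finding, int]:
    """Apply environment changes and return the updated finding plus the risk delta."""
    updated = replace(finding, **changes)
    return updated, updated.risk - finding.risk


# Example: the dev service gets exposed publicly and a proof of concept appears.
svc = Finding("Dev dependency, non-production service", 1, 1, 1, 1, 3)
updated, delta = rescore(svc, exposure=3, exploit=2)
print(updated.risk, delta)  # 7 3
```

Using an immutable record and returning a new copy keeps the old score available, which helps when someone asks why a ticket moved.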

For patch management, the matrix helps with windows and exceptions. A publicly exposed, KEV-listed flaw with a low effort score should jump into the next available slot. A harder fix can wait for a maintenance window, as long as the team sets a date and a backup control.
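The window rule above can be sketched as a helper that picks a patch date. Everything here is an assumption for illustration: `pick_patch_slot` is a hypothetical name, "next available slot" is modeled as today, maintenance windows are a plain list of dates, and every deferred fix is required to name a backup control, which is stricter than the text for low-risk items.

```python
from datetime import date
from typing import Optional


def pick_patch_slot(
    today: date,
    maintenance_windows: list[date],
    known_exploited: bool,
    public: bool,
    effort: int,
    backup_control: Optional[str] = None,
) -> date:
    """Pick a patch date: exposed KEV items with low effort jump the queue.

    Hypothetical helper; "next available slot" is modeled as today.
    """
    if known_exploited and public and effort == 1:
        return today  # jump the queue: next available slot
    # Deferring to a window requires a written backup control.
    if not backup_control:
        raise ValueError("deferring to a maintenance window needs a backup control")
    upcoming = [w for w in sorted(maintenance_windows) if w >= today]
    if not upcoming:
        raise ValueError("no upcoming maintenance window; set a date explicitly")
    return upcoming[0]


windows = [date(2030, 1, 10), date(2030, 1, 24)]
print(pick_patch_slot(date(2030, 1, 12), windows, True, True, 3, backup_control="WAF rule"))
# 2030-01-24
```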

The best matrix is the one your team can apply in five minutes, then defend in front of an engineer or a manager.

If your team wants help turning this into a repeatable process, Book a Discovery Call with Bud Consulting.

Keep the list short enough to use

Lean teams do better with fewer inputs and firmer rules. A security fix prioritization matrix should highlight public exposure, known exploited vulnerabilities, compensating controls, and remediation effort without turning into a spreadsheet contest.

Used well, it keeps the team focused on the fixes that cut real risk. That is the whole point, especially when the queue is full and time is short.