
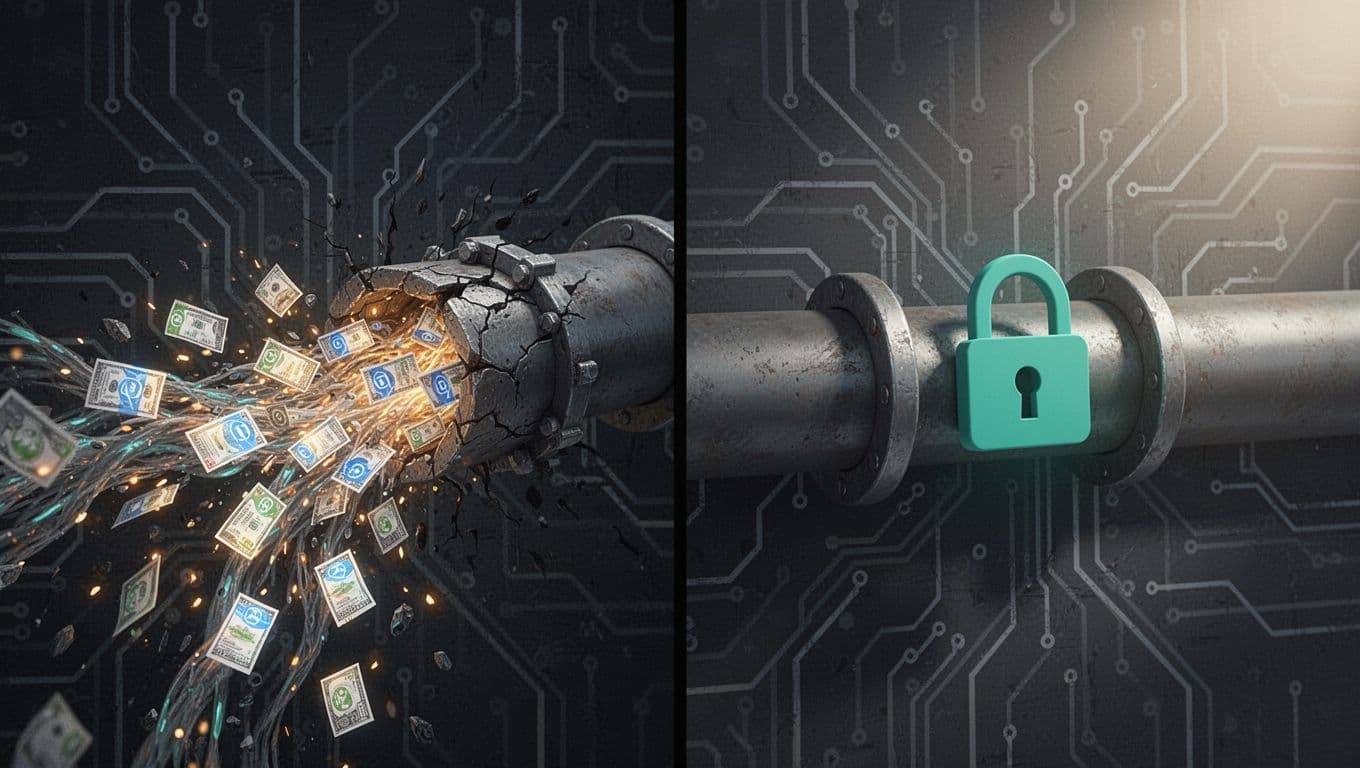

Attackers love exposed AI APIs. They spot public endpoints or leaked keys and turn them into data dumps or massive bills. You run AI services, so these risks hit close to home.

Recent breaches prove the point. In April 2026, hackers used Vercel’s APIs to grab environment variables after compromising a worker account. Other cases, like the Lilli chatbot, exposed data through 22 unauthenticated endpoints. These incidents cost millions and show why you need better management.

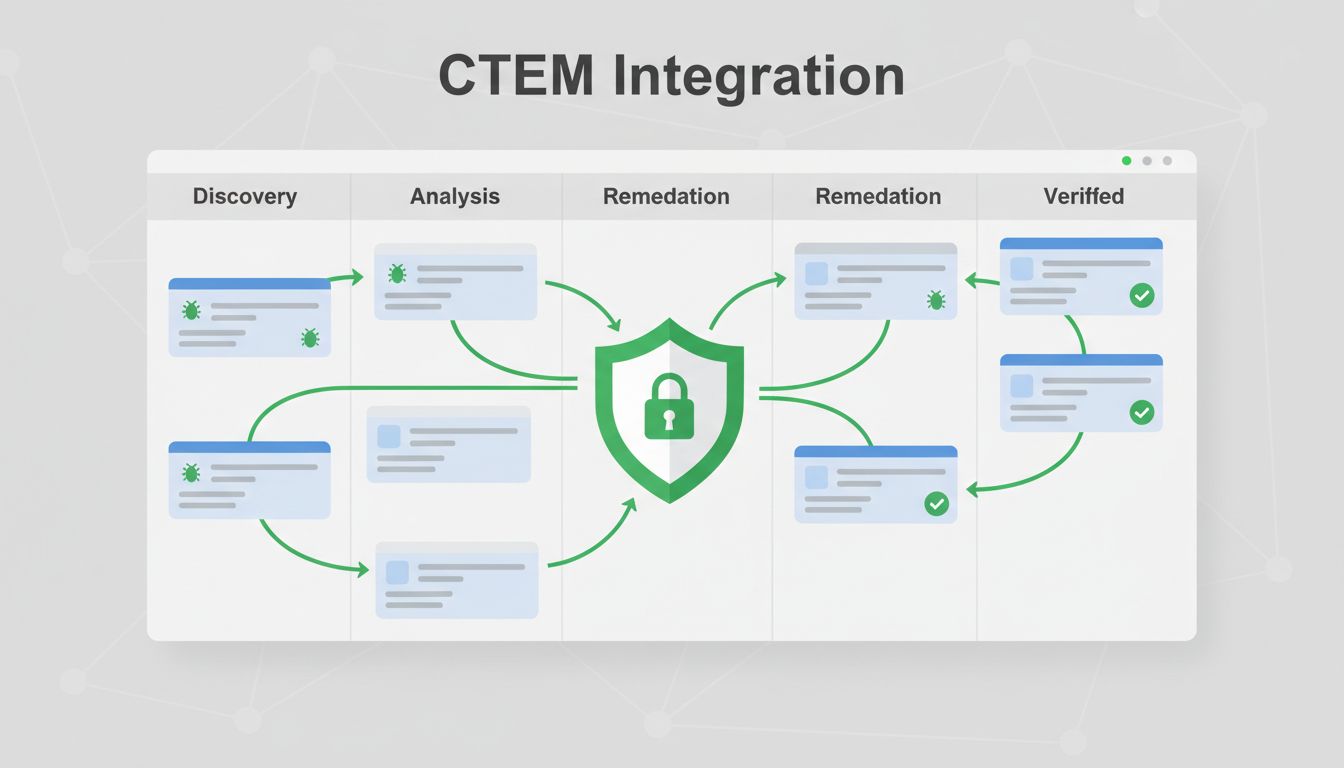

Continuous Threat Exposure Management (CTEM) changes that. It goes beyond scans to continuous discovery, validation, and fixes. Let’s walk through playbooks that fit your CTEM program.

Why Exposed AI APIs Demand CTEM Attention

Exposed AI APIs include public inference endpoints, misconfigured gateways, and leaked credentials. They differ from standard APIs because they handle sensitive prompts, model outputs, and agent actions. One weak spot lets attackers query proprietary models or exfiltrate training data.

Traditional vulnerability management scans for CVEs and patches code. CTEM focuses on real-world exploitability. It maps your attack surface, tests exposures live, and prioritizes based on business impact. For AI, that means checking if an endpoint reaches the internet and what data it touches.

Consider rate limits and abuse potential. An open endpoint without throttling invites spam queries that rack up costs or, where user inputs feed back into training, poison models. Business criticality matters too. An exposed RAG backend pulling customer data ranks higher than a test inference server.

You already know scans miss context. CTEM adds validation, like attempting real API calls from outside your network. This approach caught issues in the Google Gemini API key exposures, where client-side keys granted access to private data.

Detection Playbooks for Exposed AI APIs

Start with automated discovery. Use tools like Shodan or cloud asset inventories to find AI-specific ports and services. Look for common fingerprints: /v1/chat/completions paths for OpenAI-compatible endpoints or WebSocket handshakes for agents.

Build a detection checklist. First, query public registries for your domains and IPs. Next, scan for open ports 80, 443, and 8080. Then probe each service with curl: send a JSON prompt payload (Content-Type: application/json) and look for model output, or check for custom AI response headers such as x-ai-model if your stack sets one.
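The fingerprinting step can be sketched in Python. This is a minimal sketch, assuming you probe each host with credential-free GET requests. Two signatures are real defaults (OpenAI-compatible servers answer `GET /v1/models` with `{"object": "list", "data": [...]}`; Ollama answers `GET /` with "Ollama is running" and lists models at `/api/tags`), while `fetch`, `fingerprint`, `CANDIDATE_PATHS`, and the `x-ai-model` header check are hypothetical helpers to adapt to your stack.

```python
import json
import urllib.error
import urllib.request
from typing import Optional, Tuple

# Hypothetical list of paths to probe on each host.
CANDIDATE_PATHS = ("/", "/v1/models", "/api/tags")


def fetch(base_url: str, path: str, timeout: float = 5.0) -> Tuple[int, dict, str]:
    """GET base_url + path without credentials; return (status, headers, body)."""
    req = urllib.request.Request(base_url.rstrip("/") + path, method="GET")
    try:
        with urllib.request.urlopen(req, timeout=timeout) as resp:
            body = resp.read(65536).decode("utf-8", "replace")
            return resp.status, dict(resp.headers), body
    except urllib.error.HTTPError as err:
        return err.code, dict(err.headers), ""
    except OSError:
        return 0, {}, ""  # unreachable, refused, or timed out


def fingerprint(path: str, status: int, headers: dict, body: str) -> Optional[str]:
    """Label an unauthenticated 200 response as a likely AI service, else None."""
    if status != 200:
        return None
    # Hypothetical custom header; only useful if your own stack sets it.
    if "x-ai-model" in {k.lower() for k in headers}:
        return "custom-ai-header"
    # Ollama answers GET / with a plain-text banner.
    if path == "/" and "Ollama is running" in body:
        return "ollama"
    try:
        data = json.loads(body)
    except ValueError:
        return None
    if not isinstance(data, dict):
        return None
    # OpenAI-compatible servers list models as {"object": "list", "data": [...]}.
    if path == "/v1/models" and data.get("object") == "list" and isinstance(data.get("data"), list):
        return "openai-compatible"
    # Ollama lists local models at /api/tags as {"models": [...]}.
    if path == "/api/tags" and isinstance(data.get("models"), list):
        return "ollama"
    return None
```

Loop over `CANDIDATE_PATHS` for each host, call `fetch`, then pass the result to `fingerprint`. Because no credentials were sent, any non-None label marks an unauthenticated AI endpoint.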

Here’s sample logic for your playbook:

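| Check | Command Example | Positive Indicator |
|---|---|---|
| Open inference endpoint | `curl -X POST https://yourapi.com/v1/chat/completions -H "Content-Type: application/json" -d '{"model": "gpt-4", "messages": [{"role": "user", "content": "hello"}]}'` | Returns JSON with model output and no 401/403 |
| Leaked credentials | `grep -rEn "sk-[A-Za-z0-9_-]{20,}" /path/to/repos` | Matches OpenAI-style (sk-, sk-proj-) or Anthropic (sk-ant-) key patterns |
| Unsecured RAG backend | `curl "https://yourapi.com/retrieve?query=test"` | Exposes vector store results |
| Exposed agent tools | `nmap -p- target` | Port 11434 (Ollama default) or 8000 (common default for vLLM and LangServe apps) open |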
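The leaked-credentials check can also run as a script in CI. This is a minimal sketch: the regexes follow publicly documented key prefixes (`sk-`/`sk-proj-` for OpenAI, `sk-ant-` for Anthropic, `AIza` for Google API keys) and are heuristics, not vendor-published validators. `scan_text` and `scan_repo` are hypothetical helper names.

```python
import re
from pathlib import Path
from typing import List, Tuple

# Heuristic patterns based on documented key prefixes; expect some false positives.
# \b stops matches inside words such as "task-...".
KEY_PATTERNS = {
    "anthropic": re.compile(r"\bsk-ant-[A-Za-z0-9_-]{20,}"),
    "openai": re.compile(r"\bsk-(?!ant-)(?:proj-)?[A-Za-z0-9_-]{20,}"),
    "google": re.compile(r"\bAIza[0-9A-Za-z_-]{35}"),
}


def scan_text(text: str) -> List[Tuple[str, str]]:
    """Return (provider, matched_key) pairs found in text."""
    hits = []
    for provider, pattern in KEY_PATTERNS.items():
        hits.extend((provider, m.group(0)) for m in pattern.finditer(text))
    return hits


def scan_repo(root: str, max_bytes: int = 1_000_000) -> List[Tuple[str, str, str]]:
    """Walk a checkout and report (file, provider, redacted_key) for each hit."""
    findings = []
    for path in Path(root).rglob("*"):
        if ".git" in path.parts or not path.is_file():
            continue
        try:
            if path.stat().st_size > max_bytes:
                continue
            text = path.read_text(encoding="utf-8", errors="ignore")
        except OSError:
            continue
        for provider, key in scan_text(text):
            # Report only a prefix so the scan output does not re-leak the key.
            findings.append((str(path), provider, key[:10] + "..."))
    return findings
```

Failing the pipeline whenever `scan_repo` returns findings keeps new keys out of the main branch. Keys already in history still need rotation.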

Run this weekly and integrate it with your SIEM for alerts. One scan might reveal inference services bound to 0.0.0.0 (all interfaces) instead of localhost, or overly permissive service accounts.

Focus on internet-reachable assets first. Internal APIs matter less until attackers pivot inside.
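To confirm internet reachability without a full nmap run, a plain TCP connect check is often enough. This is a minimal sketch, assuming you run it from a host outside your network against public IPs you own. The port list reuses 8000 and 11434 from the detection table plus 8080 from the checklist, and `reachable_ports` is a hypothetical helper.

```python
import socket
from typing import Iterable, List

# 11434 is Ollama's default port; 8000 is a common default for vLLM and
# LangServe/FastAPI apps; 8080 comes from the detection checklist.
AI_PORTS = (8000, 8080, 11434)


def reachable_ports(host: str, ports: Iterable[int] = AI_PORTS, timeout: float = 1.0) -> List[int]:
    """Return the ports on host that accept a TCP connection."""
    open_ports = []
    for port in ports:
        try:
            # A completed handshake means something is listening and reachable.
            with socket.create_connection((host, port), timeout=timeout):
                open_ports.append(port)
        except OSError:
            pass  # refused, filtered, or timed out
    return open_ports
```

If the same port answers from outside but was meant to serve only localhost, the service is probably bound to 0.0.0.0.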

Prioritizing Exposed AI API Risks

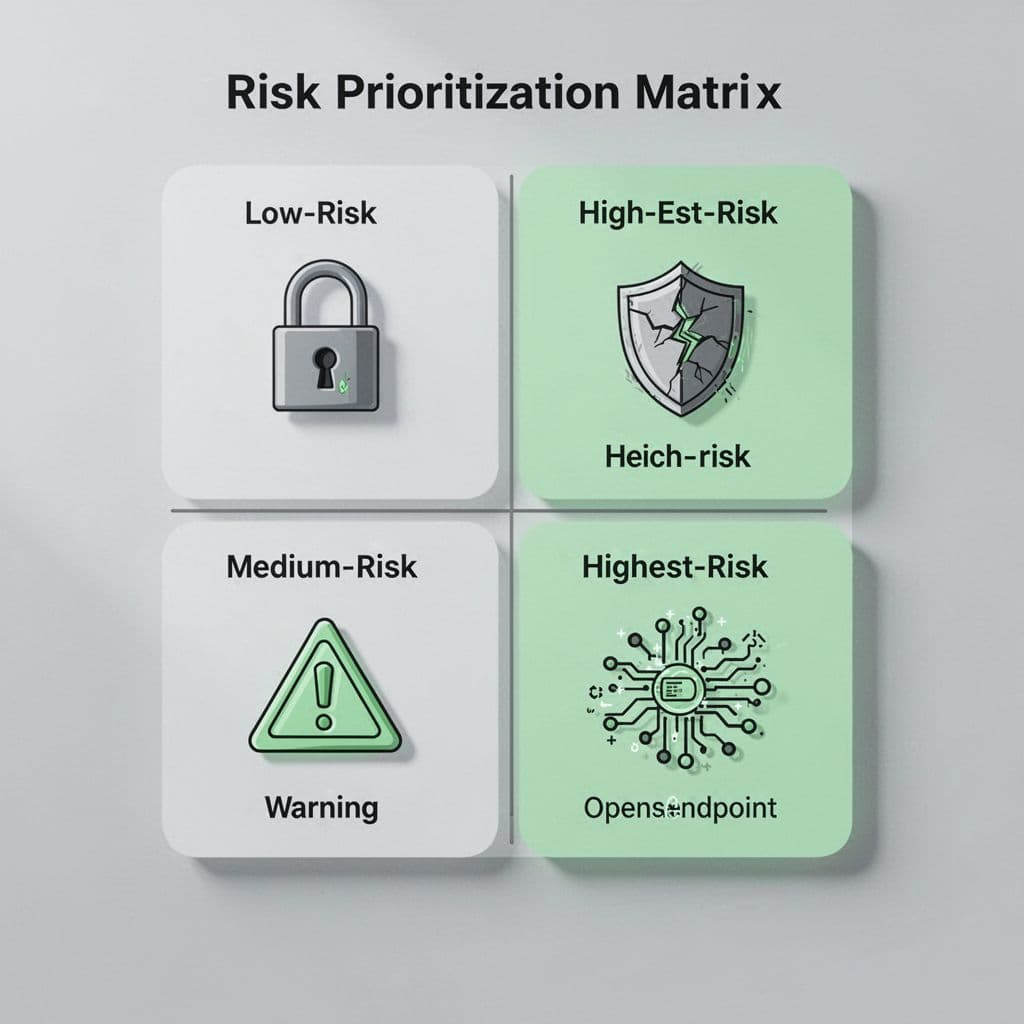

Not all exposures need urgent fixes. Prioritize with factors like reachability, auth strength, and data sensitivity. Score them on a matrix to guide your team.

High risk applies when the API faces the public internet, lacks auth, and processes PII or proprietary models. Medium risk covers weak rate limits or agent integrations. Low-risk APIs stay firewalled, but keep monitoring them.

Create a simple scoring system. Assign points: +3 for public IP, +2 for no auth, +1 per sensitive data type (prompts, embeddings). Total over 5? Mobilize now.
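The point system above translates directly into code. This is a sketch: the weights (+3, +2, +1 per type) and the "over 5" cutoff come from the text, while the `Exposure` fields and the data-type labels in `SENSITIVE_TYPES` are hypothetical placeholders for your own inventory.

```python
from dataclasses import dataclass, field
from typing import Set

# Data types that earn +1 each; labels are hypothetical, so match them to your inventory.
SENSITIVE_TYPES = {"prompts", "embeddings", "pii", "model_weights"}


@dataclass
class Exposure:
    name: str
    public_ip: bool
    has_auth: bool
    data_types: Set[str] = field(default_factory=set)


def score(exp: Exposure) -> int:
    points = 3 if exp.public_ip else 0               # +3 for a public IP
    points += 0 if exp.has_auth else 2               # +2 for no auth
    points += len(exp.data_types & SENSITIVE_TYPES)  # +1 per sensitive data type
    return points


def action(points: int, threshold: int = 5) -> str:
    # "Total over 5" means strictly greater than 5.
    return "mobilize now" if points > threshold else "queue and monitor"
```

For example, a public, unauthenticated RAG backend handling prompts and embeddings scores 3 + 2 + 2 = 7 and triggers mobilization. A firewalled, authenticated test server that only sees prompts scores 1.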

For example, a misconfigured gateway with OAuth but no scopes scores lower than a leaked Anthropic key. Model capabilities factor in too: fine-tuning endpoints outrank basic chat endpoints.

This beats raw CVSS base scores, which ignore deployment context. Clawctl’s 2026 research on 42,665 exposed agents found 93% exploitable. Use its table of vulnerabilities, such as plaintext keys (89%), to refine your own weights.

Validation Techniques Tailored to AI Services

Discovery finds candidates. Validation confirms exploitability. Send test payloads to mimic attackers without harm.

For public endpoints, POST a benign prompt: {"model": "gpt-4", "messages": [{"role": "user", "content": "hello"}]}. A 200 response with model output means no auth blocks the request. Check responses for leaks, such as other users’ conversation history.
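The benign-prompt check can be scripted. This is a minimal sketch, assuming an OpenAI-compatible chat endpoint: it sends the payload from the text with no credentials and classifies the reply. The `"gpt-4"` model name, `send_probe`, and `assess` are assumptions. Checking a reply for leaked history still needs a human read.

```python
import json
import urllib.error
import urllib.request
from typing import Tuple


def benign_payload(model: str = "gpt-4") -> dict:
    """The harmless chat request from the text; the model name is an assumption."""
    return {"model": model, "messages": [{"role": "user", "content": "hello"}]}


def send_probe(url: str, timeout: float = 10.0) -> Tuple[int, str]:
    """POST the benign payload with no credentials; return (status, body)."""
    data = json.dumps(benign_payload()).encode("utf-8")
    req = urllib.request.Request(
        url, data=data, headers={"Content-Type": "application/json"}, method="POST"
    )
    try:
        with urllib.request.urlopen(req, timeout=timeout) as resp:
            return resp.status, resp.read(65536).decode("utf-8", "replace")
    except urllib.error.HTTPError as err:
        return err.code, ""


def assess(status: int, body: str) -> str:
    """Classify the probe result; only a 200 with completion choices counts as open."""
    if status in (401, 403):
        return "auth enforced"
    if status != 200:
        return f"inconclusive (HTTP {status})"
    try:
        data = json.loads(body)
    except ValueError:
        return "inconclusive (non-JSON body)"
    if isinstance(data, dict) and data.get("choices"):
        return "OPEN: unauthenticated completion"
    return "inconclusive (unexpected JSON)"
```

Typical use is `assess(*send_probe("https://yourapi.com/v1/chat/completions"))`. Each probe costs one tiny generation, so run it only against your own assets.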

Test leaked credentials with a read-only call, such as listing available models, from an isolated project. Query the Gemini or Claude API. If a key works, assume public copies do too, and rotate it immediately.
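A read-only liveness check avoids generating anything with a leaked key. This is a sketch for keys belonging to your own organization. It uses the documented model-listing endpoints (Anthropic `GET /v1/models` with `x-api-key` and `anthropic-version` headers; Gemini `GET /v1beta/models` with `x-goog-api-key`). The helper names are hypothetical.

```python
import urllib.error
import urllib.request


def anthropic_models_request(api_key: str) -> urllib.request.Request:
    # Read-only: lists models and generates nothing.
    return urllib.request.Request(
        "https://api.anthropic.com/v1/models",
        headers={"x-api-key": api_key, "anthropic-version": "2023-06-01"},
        method="GET",
    )


def gemini_models_request(api_key: str) -> urllib.request.Request:
    # Read-only: lists models available to this key.
    return urllib.request.Request(
        "https://generativelanguage.googleapis.com/v1beta/models",
        headers={"x-goog-api-key": api_key},
        method="GET",
    )


def key_is_live(req: urllib.request.Request, timeout: float = 10.0) -> bool:
    """True if the provider accepts the key; 401/403 and other HTTP errors return False."""
    try:
        with urllib.request.urlopen(req, timeout=timeout) as resp:
            return resp.status == 200
    except urllib.error.HTTPError:
        return False
```

Rotate the key whatever the result. A False here may reflect a rate limit or outage rather than a dead key.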

For RAG backends, attempt retrieval with queries that should match known documents. For agent tools, connect via WebSocket (or the agent’s HTTP transport) and request its tool list. Tools like HackerOne’s CTEM framework automate this with safe emulation.
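For agents that speak the Model Context Protocol (MCP), listing tools is a JSON-RPC 2.0 call. This sketch builds the messages and parses the reply. It assumes you send them over whatever transport the agent exposes, which is omitted here. Per the MCP spec, clients send `initialize` (then a `notifications/initialized` notification) before `tools/list`. The protocol version string, client name, and `HIGH_RISK_TOOLS` names are assumptions.

```python
import json
from typing import List

# Hypothetical names for tools that warrant immediate escalation.
HIGH_RISK_TOOLS = {"shell", "exec", "run_command", "write_file"}


def initialize_message(request_id: int = 1) -> dict:
    """JSON-RPC initialize request; the protocol version is an assumption, match your server."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "initialize",
        "params": {
            "protocolVersion": "2024-11-05",
            "capabilities": {},
            "clientInfo": {"name": "ctem-validator", "version": "0.1"},
        },
    }


def tools_list_message(request_id: int = 2) -> dict:
    return {"jsonrpc": "2.0", "id": request_id, "method": "tools/list"}


def exposed_tools(response_text: str) -> List[str]:
    """Extract tool names from a tools/list response; an error reply yields []."""
    msg = json.loads(response_text)
    if "error" in msg:
        return []
    return [tool["name"] for tool in msg.get("result", {}).get("tools", [])]


def high_risk(tools: List[str]) -> List[str]:
    return sorted(set(tools) & HIGH_RISK_TOOLS)
```

If an unauthenticated client gets any tool list back, the agent is exposed. Anything returned by `high_risk` moves it to the top of the queue.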

This is where CTEM differs from vulnerability management: scanners flag broken auth, while CTEM tries to exploit it. Run validations daily on high-priority assets, and log the attack paths you confirm for SOC review.
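Logging each validation result as a single JSON line keeps it easy for a SIEM to ingest. This is a minimal sketch. The field names and the `validation_record` helper are hypothetical, so align them with your SIEM's schema.

```python
import json
from datetime import datetime, timezone
from typing import List


def validation_record(asset: str, check: str, result: str, attack_path: List[str]) -> str:
    """Serialize one validation result as a single-line JSON object."""
    return json.dumps(
        {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "asset": asset,
            "check": check,
            "result": result,
            # Ordered hops an attacker would take, e.g. internet -> gateway -> store.
            "attack_path": attack_path,
        },
        sort_keys=True,
    )
```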

Remediation Steps in Your CTEM Playbook

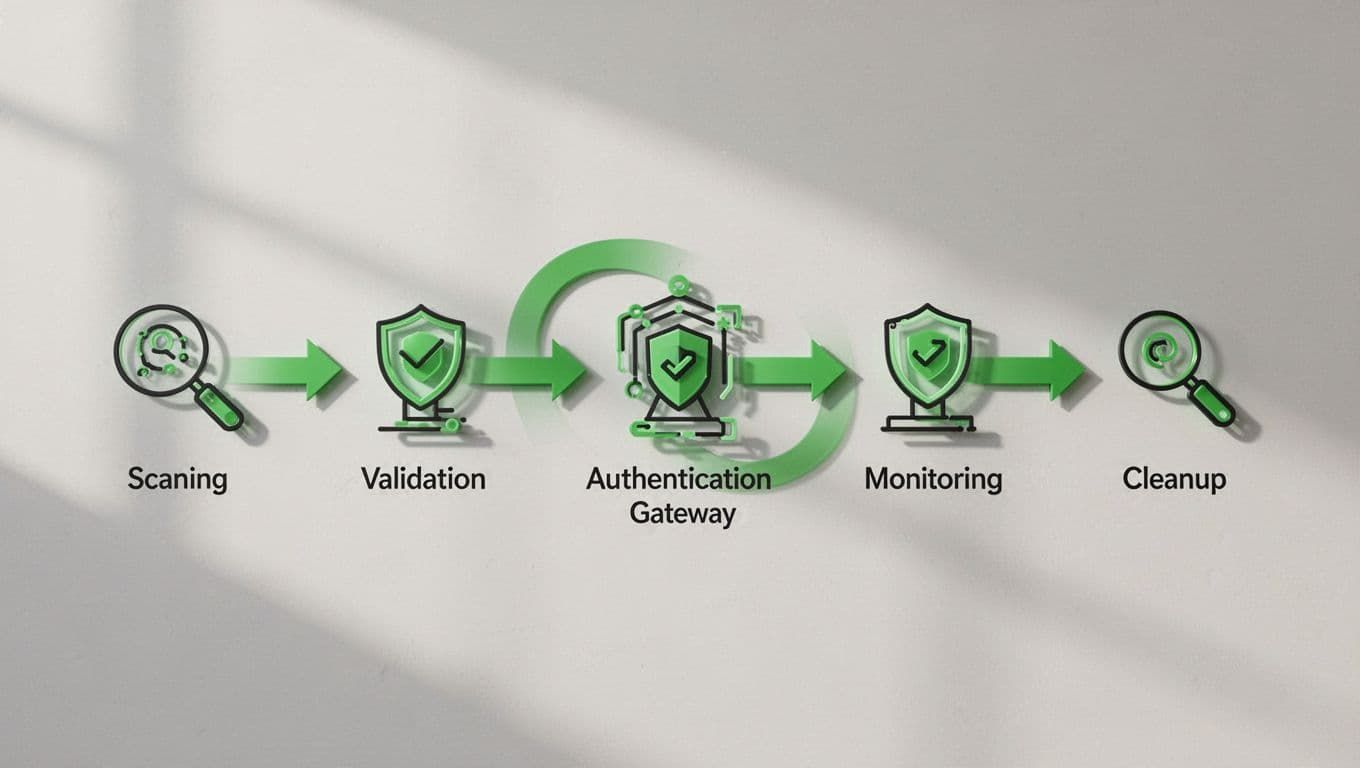

Fixes follow a sequence: isolate, auth up, monitor. Don’t just patch; prevent recurrence.

Step 1: Block public access. Firewall the endpoint or add IP allowlists.

Step 2: Deploy an API gateway such as Kong or AWS API Gateway. Enforce OAuth 2.0, JWT validation, and rate limits (e.g., 100 requests per minute).

Step 3: Rotate keys and audit service accounts. Revoke permissive IAM roles.

Step 4: Add logging and WAF rules for AI payloads that flag and block known prompt injection patterns.

Step 5: Revalidate after the fix, then repeat discovery.
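Gateways such as Kong implement rate limiting for you, but a token bucket shows what a 100 requests-per-minute limit from Step 2 actually does. This is an illustrative sketch with a hypothetical `TokenBucket` class, not Kong's implementation. The bucket starts full, so it allows a burst of up to `capacity` requests and then refills at the steady rate.

```python
import time
from typing import Callable, Optional


class TokenBucket:
    """Allow `rate_per_minute` requests per minute, with bursts up to `capacity`."""

    def __init__(
        self,
        rate_per_minute: float = 100,
        capacity: Optional[float] = None,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.rate = rate_per_minute / 60.0  # tokens added per second
        self.capacity = capacity if capacity is not None else rate_per_minute
        self.tokens = float(self.capacity)  # start full
        self.clock = clock
        self.last = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill for the elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False
```

Keep one bucket per API key or client identity, so a single leaked key cannot exhaust the budget for everyone.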

Test fixes in staging first. For agents, govern MCP endpoints with explicit allow lists. The State of API Security 2026 report stresses that fixing broken authentication, as these steps do, cuts risk quickly.
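An explicit allow list for agent tools is a default-deny lookup. This is a minimal sketch: the agent and tool names in `ALLOWED_TOOLS` are hypothetical, and in practice the check would run in whatever proxy or gateway sits in front of your MCP servers.

```python
from typing import Dict, List, Set

# Hypothetical per-agent allow lists; anything not listed is denied.
ALLOWED_TOOLS: Dict[str, Set[str]] = {
    "support-agent": {"search_docs", "create_ticket"},
    "analytics-agent": {"run_readonly_query"},
}


def authorize_tool_call(agent: str, tool: str) -> bool:
    """Default deny: unknown agents and unlisted tools are rejected."""
    return tool in ALLOWED_TOOLS.get(agent, set())


def filter_tool_list(agent: str, tools: List[dict]) -> List[dict]:
    """Trim a tools/list result so the agent only sees tools it may call."""
    allowed = ALLOWED_TOOLS.get(agent, set())
    return [t for t in tools if t.get("name") in allowed]
```

Filtering the tool list and checking each call are both needed. Hiding a tool does not stop a client that already knows its name.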

Real-World Examples of Exposed AI APIs

Breaches illustrate why these playbooks matter. In January 2026, Moltbook exposed 1.5 million AI agent keys through a Supabase key hardcoded in client-side JavaScript.

Google’s issue let Google Maps API keys reach Gemini data. Attackers copied keys from public websites and used them to query private information. Google now scopes keys more tightly.

Clawctl found 42,000 agents with no auth that leaked chats on the handshake. One exposed full shell access.

Vercel’s April breach used deep API knowledge post-compromise. Lilli’s 22 open endpoints let AI agents roam free.

These show patterns: weak auth (78%), key leaks (89%). Your playbook counters them directly.

Integrating CTEM into AI Operations

Embed playbooks in your pipelines. Hook discovery into CI/CD so every new deploy gets scanned. Automate asset mapping the way CTEM platforms such as Strobes do.

Train teams on AI risks. Run red team exercises quarterly. Track metrics: time to detect, fix rate.
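Both metrics are simple to compute once findings carry timestamps. This is a sketch with a hypothetical `Finding` record. True exposure time is rarely known, so `exposed_at` here stands in for a proxy such as deploy time or first-seen time.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List, Optional


@dataclass
class Finding:
    exposed_at: datetime   # proxy: deploy time or first-seen timestamp
    detected_at: datetime  # when discovery or validation flagged it
    fixed_at: Optional[datetime] = None


def mean_time_to_detect_hours(findings: List[Finding]) -> float:
    if not findings:
        return 0.0
    total = sum((f.detected_at - f.exposed_at).total_seconds() for f in findings)
    return total / len(findings) / 3600


def fix_rate(findings: List[Finding]) -> float:
    """Share of findings with a recorded fix."""
    if not findings:
        return 0.0
    return sum(1 for f in findings if f.fixed_at is not None) / len(findings)
```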

For cloud architects: audit Kubernetes Services. Don’t expose inference through a LoadBalancer Service unless a gateway or auth layer gates it.
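The LoadBalancer audit can run against the JSON that `kubectl get services --all-namespaces -o json` prints. This is a minimal sketch. It treats ports 8000 and 11434 as inference ports, and it assumes a hypothetical annotation (`ctem.example.com/gated`) that your team sets once a gateway fronts the Service.

```python
import json
from typing import List

# 11434 is Ollama's default port; 8000 is a common vLLM/FastAPI default.
AI_PORTS = {8000, 11434}
# Hypothetical annotation marking a Service as fronted by a gateway or auth layer.
GATE_ANNOTATION = "ctem.example.com/gated"


def ungated_inference_lbs(services_json: str) -> List[str]:
    """Flag LoadBalancer Services exposing AI ports without the gate annotation."""
    flagged = []
    for item in json.loads(services_json).get("items", []):
        meta = item.get("metadata", {})
        spec = item.get("spec", {})
        if spec.get("type") != "LoadBalancer":
            continue
        ports = set()
        for p in spec.get("ports", []):
            ports.add(p.get("port"))
            # targetPort may be a named port (a string); that simply won't match.
            ports.add(p.get("targetPort"))
        if not ports & AI_PORTS:
            continue
        if (meta.get("annotations") or {}).get(GATE_ANNOTATION) == "true":
            continue
        flagged.append(f"{meta.get('namespace', 'default')}/{meta.get('name')}")
    return flagged
```

In CI/CD, failing the deploy step whenever this list is non-empty turns the rule into a gate rather than a guideline.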

SOC teams, alert on anomalous queries like high-volume generations. AppSec, scan repos for keys.
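A baseline comparison is one simple way to catch high-volume generations. This sketch assumes log events already filtered to one time window, with hypothetical `key_id` and `kind` fields. A key is flagged when its count exceeds `factor` times its usual volume. Keys with no history, such as a leaked or newly minted key, are held to a fixed `floor`.

```python
from collections import Counter
from typing import Dict, Iterable, List, Tuple


def anomalous_keys(
    events: Iterable[dict],
    baseline: Dict[str, float],
    factor: float = 5.0,
    floor: int = 50,
) -> List[Tuple[str, int]]:
    """Return (key_id, count) for keys whose generation volume exceeds their limit."""
    counts = Counter(e["key_id"] for e in events if e.get("kind") == "generation")
    flagged = []
    for key, n in counts.items():
        # Keys without a baseline fall back to the fixed floor.
        limit = max(floor, baseline.get(key, 0) * factor)
        if n > limit:
            flagged.append((key, n))
    return sorted(flagged)
```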

Scale with AI-driven validation, as in Terra Security’s guide. It keeps pace with shadow AI.

Key Takeaways for Your CTEM Program

Exposed AI APIs threaten data and costs, but CTEM playbooks tame them through continuous cycles. Focus on detection checklists, risk matrices, and sequenced fixes.

Build yours now. Test weekly, prioritize ruthlessly, and validate everything. Teams that do this stay ahead of 2026 threats.

If gaps persist, book a discovery call with Bud Consulting to strengthen your program.
